Research entry

Physics-Inspired LLM Optimization: 5 Directions, 9 Experiments

Active · March 2026

Testing whether structures from physics — thermodynamics, renormalization group, lattice theory, and mean-field dynamics — can guide LLM compression. Five research directions validated on GPT-2 124M: entropy-based quantization (2.6× better than uniform), RG-guided pruning, lattice attention with a phase transition, GPTQ interaction analysis, and metastable cluster verification.

Motivation

Large language models are expensive to run. The standard approaches to compression — quantization, pruning, efficient attention — rely on heuristics or expensive Hessian computations. But transformers have deep structural connections to physics: attention is Hopfield energy minimization, layer depth maps to renormalization group flow, and token dynamics follow mean-field interacting particle systems.

Can we exploit these physics structures to compress LLMs more intelligently?

This research program tests five physics-inspired optimization directions on GPT-2 124M, with plans to scale to LLaMA-7B.

Direction 1: Thermodynamic Quantization

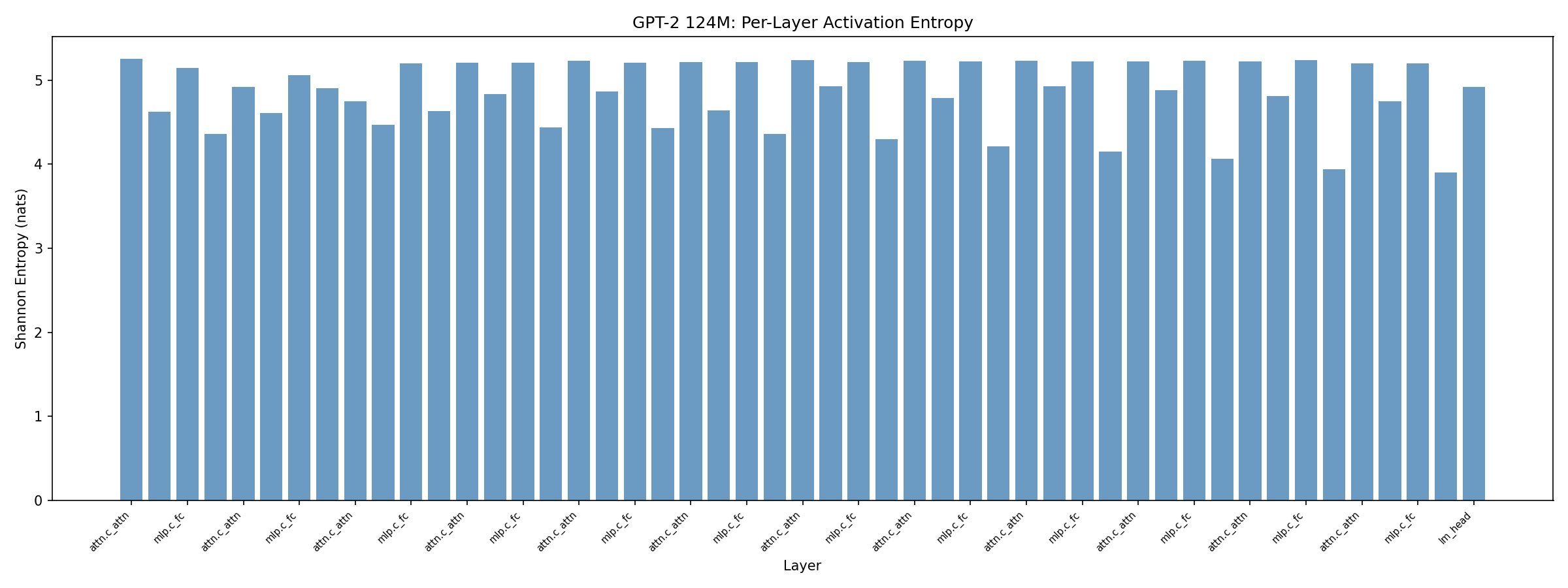

Idea: Layers with high activation entropy carry more information and need more bits. Low-entropy layers can be aggressively quantized.

Method: Collect per-layer activation entropy via streaming histograms on 128 WikiText-2 calibration samples. Allocate bits proportionally: high entropy → more bits, low entropy → fewer bits, constrained to 4-bit mean.

Results (Absmax Quantization)

| Method | Avg Bits | Perplexity | vs Uniform |

|---|---|---|---|

| FP32 baseline | 32 | 29.95 | — |

| Uniform 4-bit | 4.0 | 12,196 | 1.0× |

| Entropy-linear 4-bit | 4.0 | 4,730 | 2.6× better |

| Hessian-linear 4-bit | 4.0 | 16,089 | 0.76× (worse) |

Entropy allocation is 2.6× better than uniform at the same total bit budget.

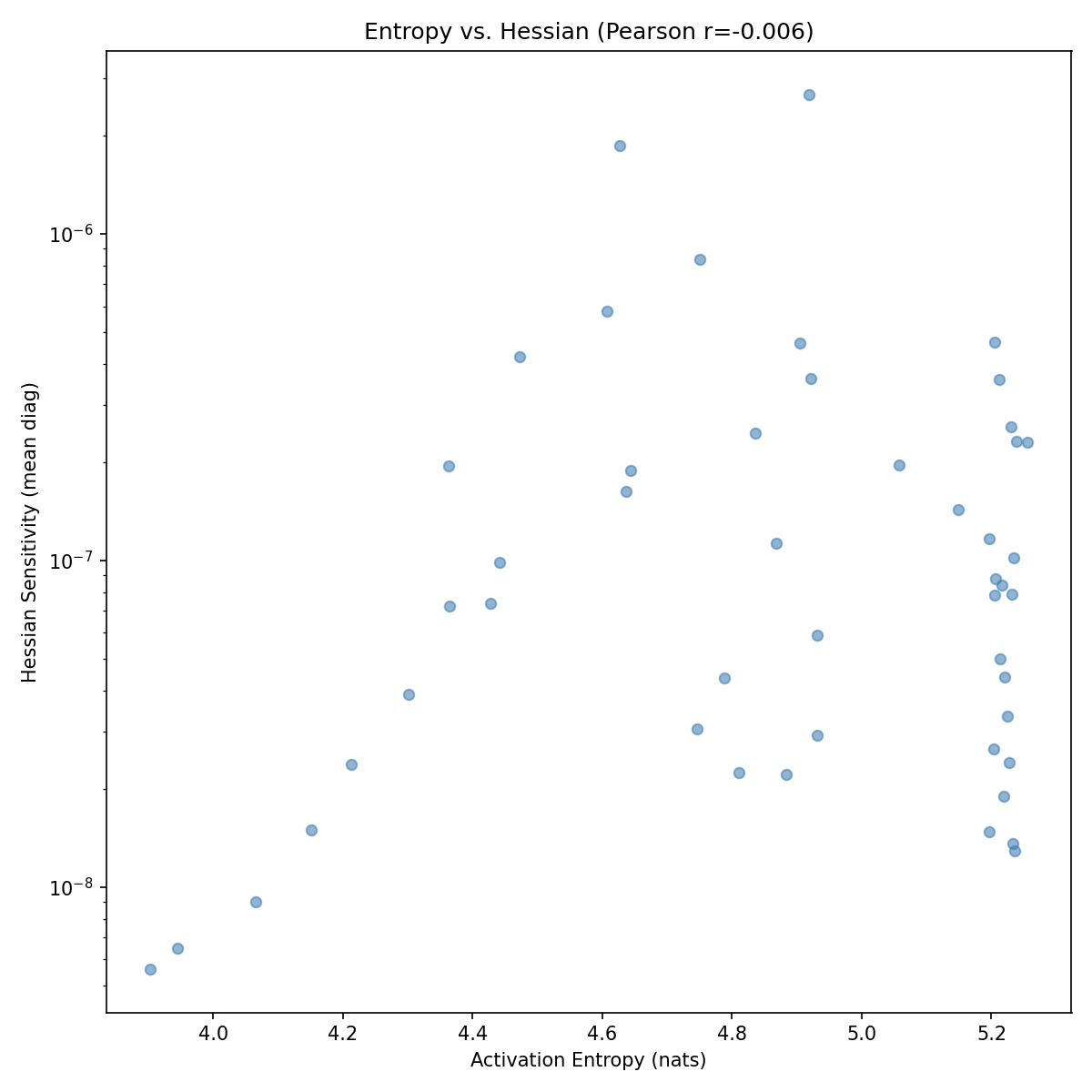

Surprise: Entropy and Hessian Are Orthogonal

We also computed diagonal Fisher (Hessian) sensitivity per layer and tested the correlation with entropy:

- Pearson r = −0.006 (essentially zero)

- Spearman r = 0.082 (negligible)

Entropy (information content) and Hessian sensitivity (loss impact) measure completely different things. This is novel — the field assumes Hessian-based methods capture the important signal, but entropy captures something orthogonal.

GPTQ Changes the Story

When we replaced naive absmax with GPTQ (Cholesky-based Hessian error compensation):

| Method | PPL |

|---|---|

| GPTQ uniform 4-bit | 985 |

| GPTQ entropy [3,6] | 1,500 |

| GPTQ entropy [2,8] | 2,677 |

GPTQ’s Hessian compensation makes entropy redundant. Uniform wins because GPTQ already handles per-layer sensitivity. Entropy allocation is most valuable in the low-sophistication quantization regime where you can’t afford Hessian computation.

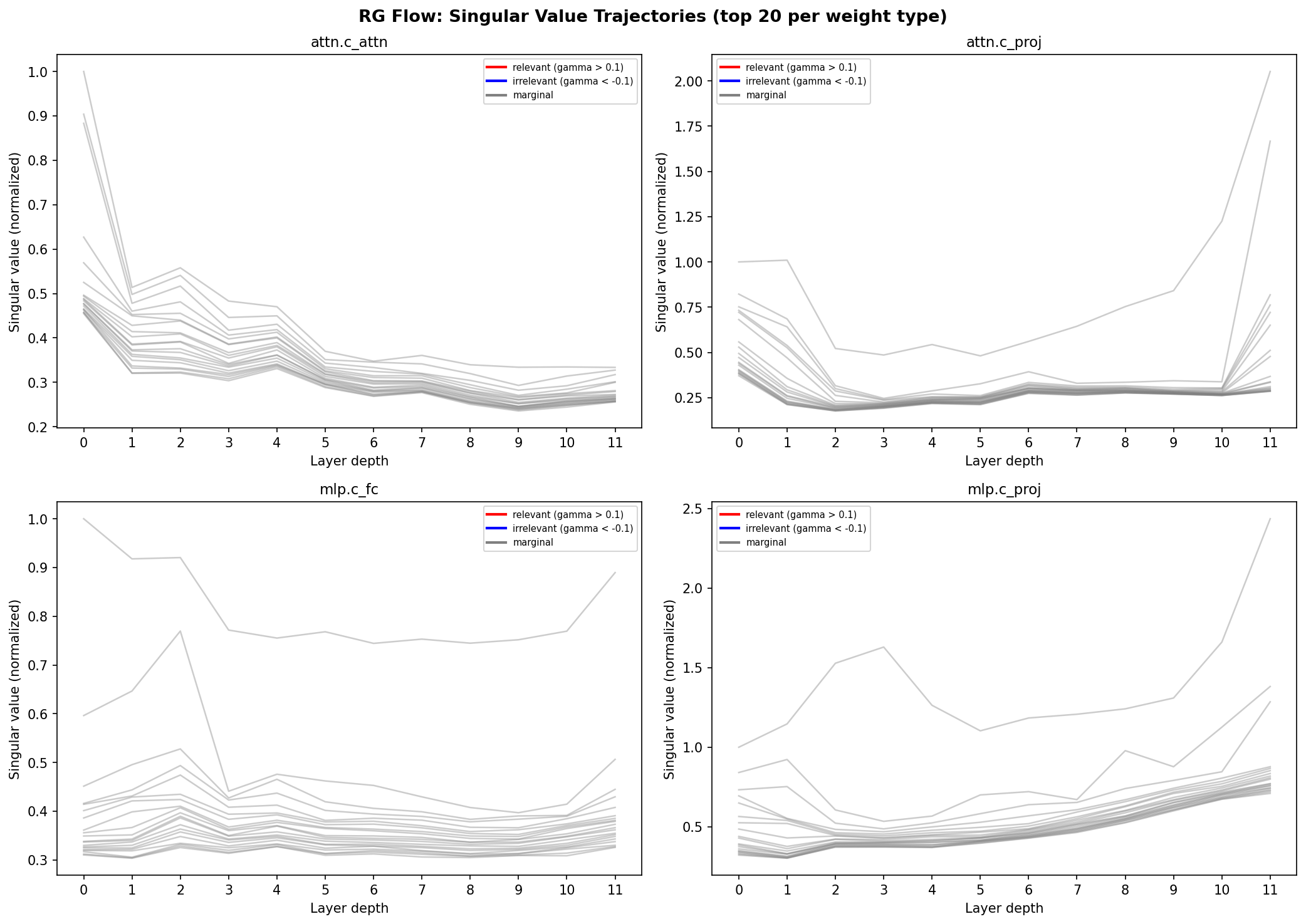

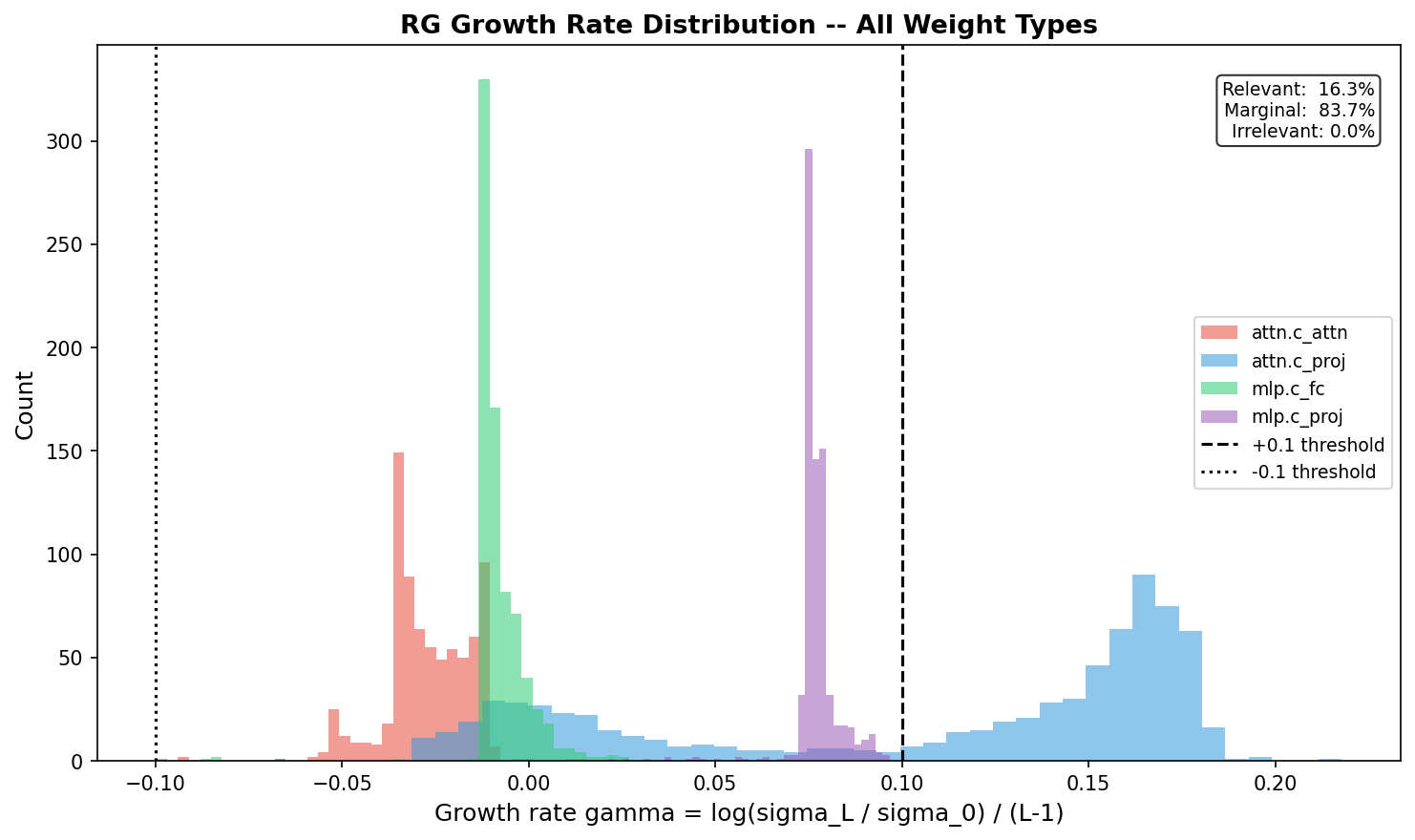

Direction 2: Renormalization Group Flow Analysis

Idea: In RG theory, “relevant operators” grow under coarse-graining while “irrelevant operators” decay. If transformers implement RG, singular values of weight matrices should show growth/decay patterns across layers — telling us which weights to prune.

Method: Compute SVD of each weight matrix type across all 12 GPT-2 layers. Track singular value trajectories and compute growth rates.

Results

| Weight Type | RG Classification | Mean Growth Rate | Pruning Safety |

|---|---|---|---|

| attn.c_proj | 65% relevant (growing) | +0.108 | Dangerous to prune |

| mlp.c_proj | Marginal | +0.077 | Moderate risk |

| attn.c_attn | Marginal (decay) | −0.027 | Safer to prune |

| mlp.c_fc | Fixed point | −0.008 | Safest to prune |

The growth rate distribution is multimodal — four distinct peaks for four weight types, not Gaussian noise.

Key insight: Output projections accumulate and amplify signal through the residual stream (relevant operators). Input projections are scale-invariant (marginal/fixed point). This gives a physics-principled pruning strategy: compress input projections aggressively, leave output projections intact.

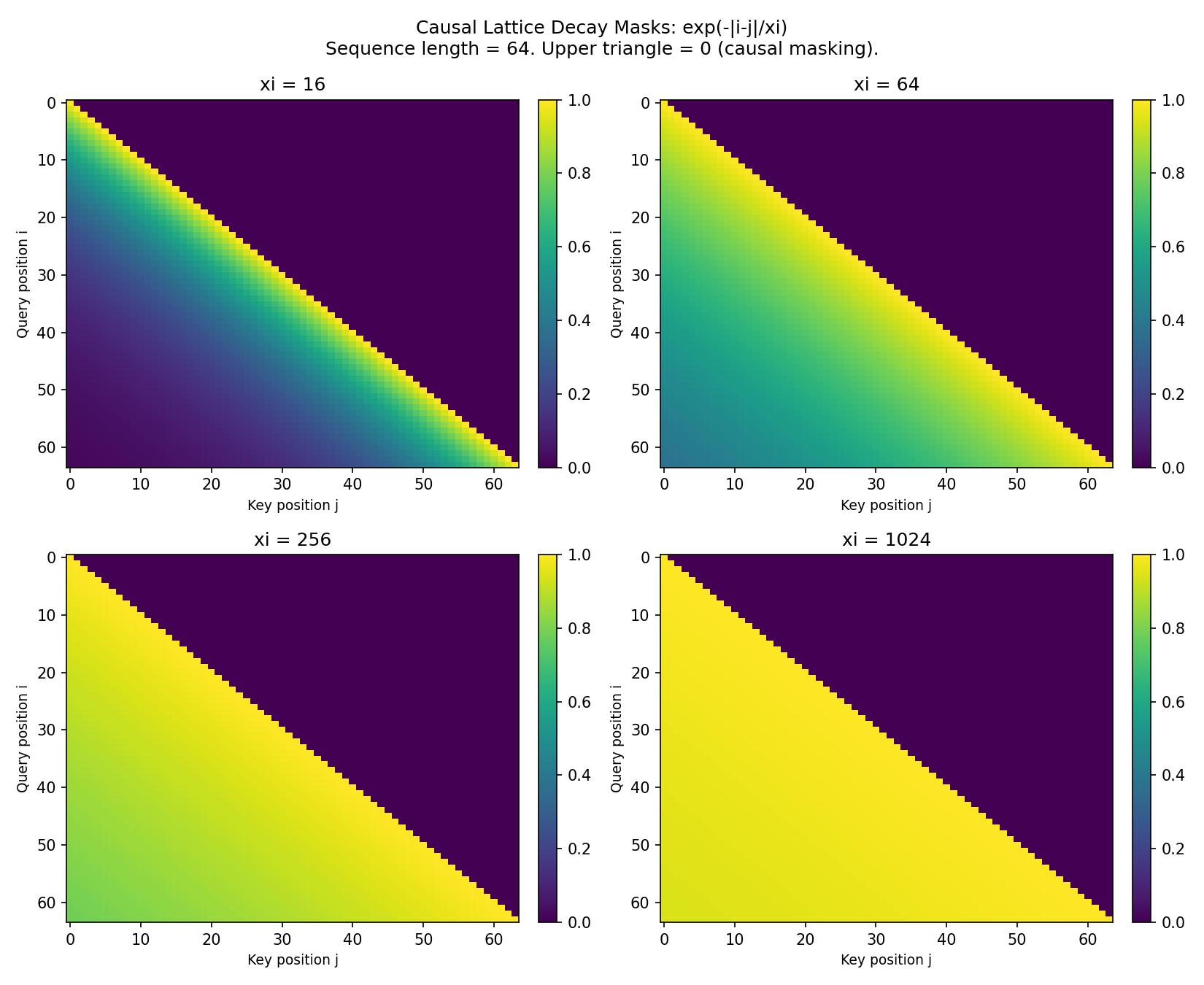

Direction 3: Lattice-Structured Attention

Idea: Replace full O(n²) attention with a physics-motivated decay kernel: a(i,j) ∝ exp(−|i−j|/ξ). This is the Green’s function of a 1D lattice — it describes how correlations decay with distance.

Method: Multiply standard attention scores by the lattice decay mask before softmax. Sweep correlation length ξ from 16 to 1024 tokens.

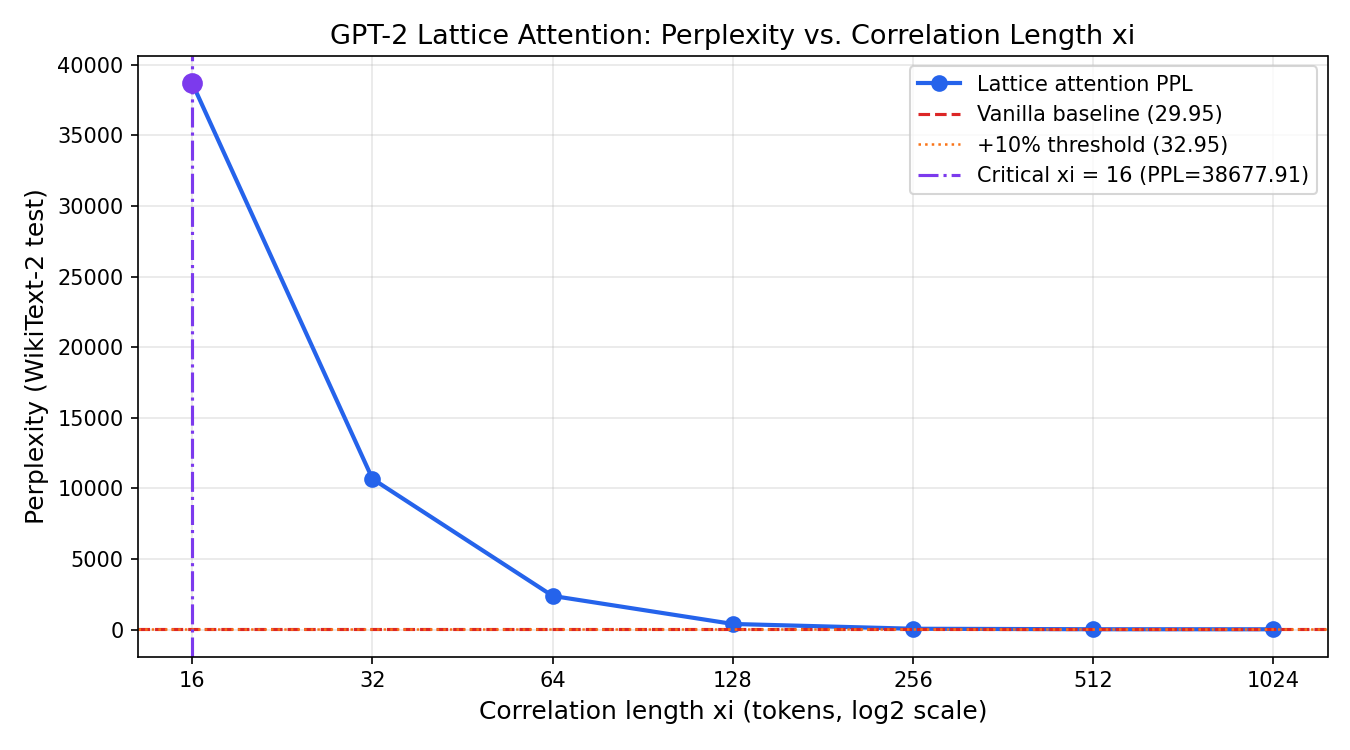

Results: A Phase Transition

| ξ (tokens) | Perplexity | vs Vanilla |

|---|---|---|

| 1024 | 30.20 | +0.8% |

| 512 | 32.47 | +8.4% |

| 256 | 68.20 | +128% |

| 128 | 406 | +1,256% |

| 64 | 2,391 | +7,882% |

| 16 | 38,678 | +129,041% |

Sharp phase transition at ξ ≈ 256–512 tokens. GPT-2 is robust down to half its context window, then collapses catastrophically. This is not gradual degradation — it’s a phase transition, the hallmark of a physical system.

This directly confirms the theoretical prediction from Rigollet 2025 (arXiv 2512.01868, ICM 2026) about critical attention scaling.

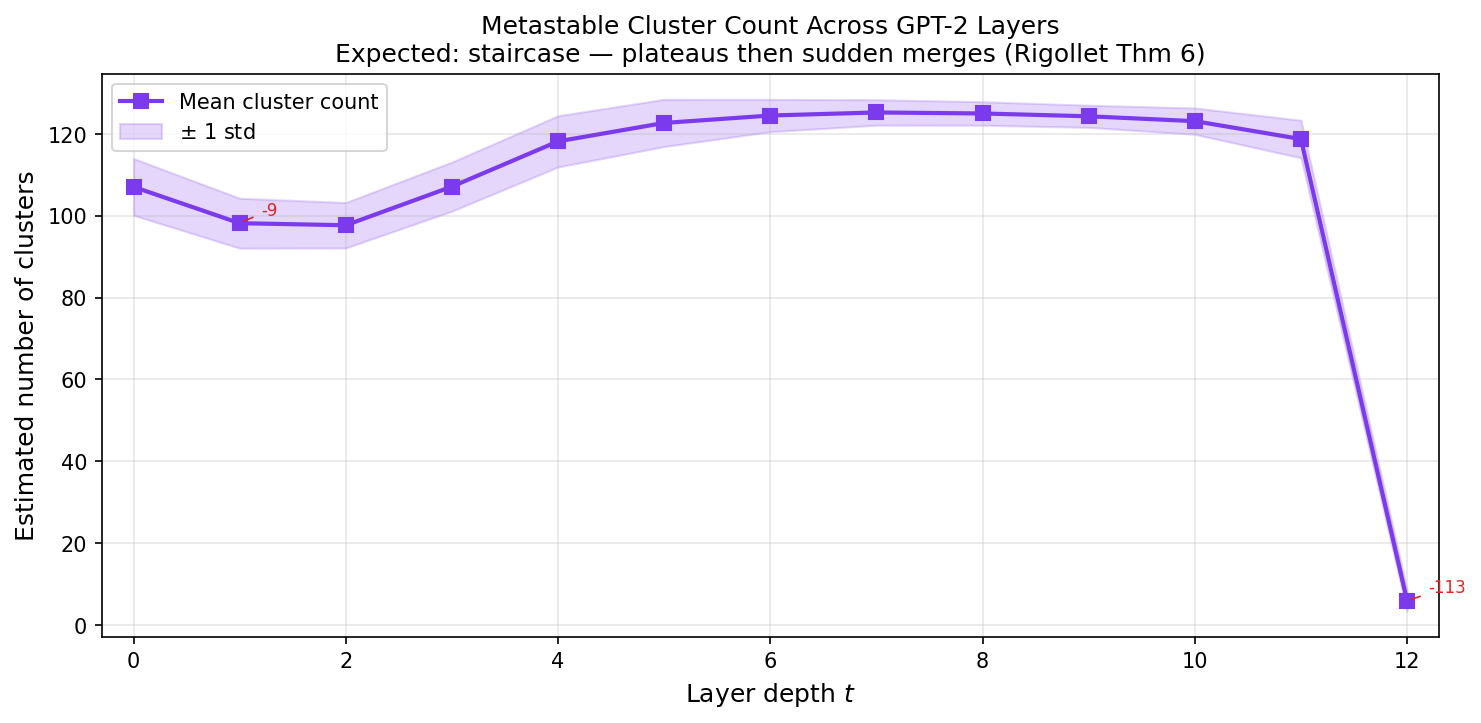

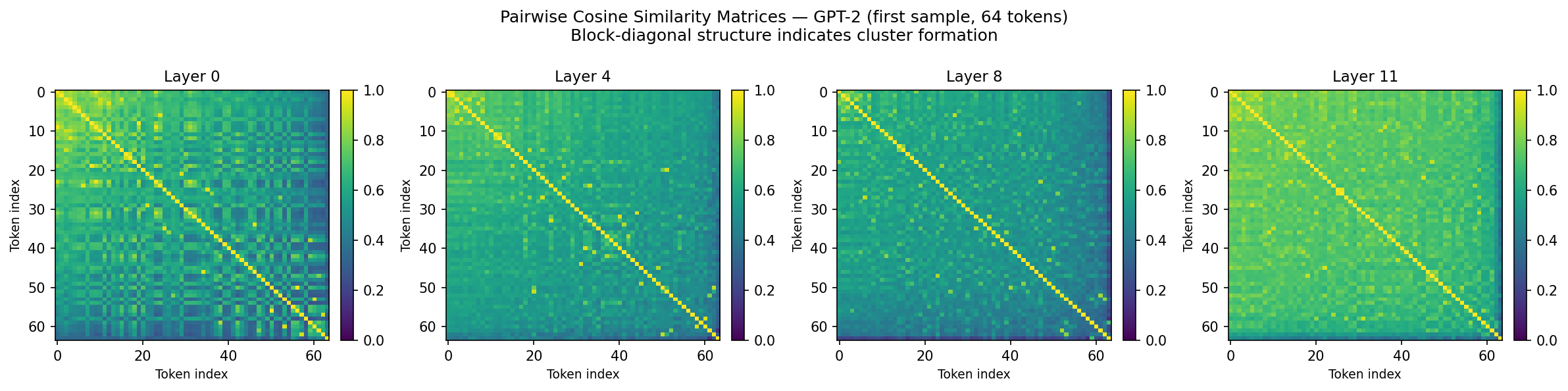

Direction 4: Metastable Cluster Verification

Idea: Rigollet 2025 proves that tokens in transformers form metastable clusters that eventually collapse to full synchronization. We verify this empirically on GPT-2.

Method: Capture hidden states at every layer, compute pairwise cosine similarity and cluster count across depth.

Results

| Layer | Mean Cosine Sim | Clusters |

|---|---|---|

| 0 | 0.488 | 107 |

| 7 | 0.488 | 125 (max diversity) |

| 11 | 0.693 | 119 |

| 12 | 0.936 | 6 (collapse) |

Layers 0–11 maintain ~125 clusters (a long metastable plateau). Layer 12 collapses everything to ~6 clusters in one shot. It’s a single cliff, not Rigollet’s predicted multi-step staircase — GPT-2 may be too shallow for multi-step merging.

The 1/t² polynomial decay predicted for Pre-LN does not fit (R² = −611). Residual connections actively fight mean-field collapse until the final layer.

Combined Compression

We applied all three physics principles simultaneously:

| Method | PPL | Compression |

|---|---|---|

| FP32 baseline | 29.95 | 1.0× |

| Lattice attn (ξ=512) | 32.47 | 1.0× |

| Entropy quant (4-bit) | 4,730 | 2.48× |

| Entropy + RG pruning | 5,515 | 2.63× |

| All three combined | 6,933 | 2.63× |

Entropy + RG pruning are slightly superadditive (combining is less bad than sum of individual costs). But lattice attention interferes with degraded weights — when the model is already damaged by quantization, it becomes more dependent on long-range attention.

Big Picture

Five physics principles, each validated experimentally:

- Entropy tells you how to quantize (which layers need more bits)

- RG flow tells you how to prune (which weight matrices to compress)

- Lattice decay tells you the minimum attention range (~512 tokens for GPT-2)

- Mean-field theory predicts the clustering dynamics (confirmed: metastable plateau + final collapse)

- Hessian and entropy are orthogonal — the field’s standard approach misses half the signal

What’s Next

- Scale to LLaMA-7B to test whether patterns generalize

- Direction 5: DMRG tensor compression — the last unexplored direction

- Combined entropy+Hessian allocator — exploit the orthogonality

Links

- Code: github.com/faulknco/physics-llm-research

- Related: spin-quant — earlier work on k-means codebook quantization

- Key paper: Rigollet 2025, “The Mean-Field Dynamics of Transformers” (arXiv 2512.01868, ICM 2026)